Zero-shot time series forecasting with Chronos using Amazon Bedrock and ClickHouse

1. Overview

The emergence of large language models (LLMs) with zero-shot generalization capabilities in sequence modelling tasks has led to the development of time series foundation models (TSFMs) based on LLM architectures. By representing time series as sequences of tokens, TSFMs can leverage LLMs’ capability to extrapolate future patterns from the context data. TSFMs eliminate the traditional need for domain-specific model development, allowing organizations to deploy accurate time series solutions faster.

In this post, we will focus on Chronos [1], a family of TSFMs for probabilistic time series forecasting developed by Amazon. In contrast to other TSFMs that rely on LLMs pre-trained on text, Chronos models are trained from scratch on a large collection of time series datasets. Moreover, unlike other TSFMs that require fine-tuning on in-domain data, Chronos models generate accurate zero-shot forecasts without any task-specific adjustments.

Recently, the Chronos family of TSFMs has been extended with Chronos-Bolt [2], a faster, more accurate, and more memory-efficient Chronos model that can also be used on CPU. Chronos-Bolt is available in AutoGluon-TimeSeries, Amazon SageMaker JumpStart and Amazon Bedrock.

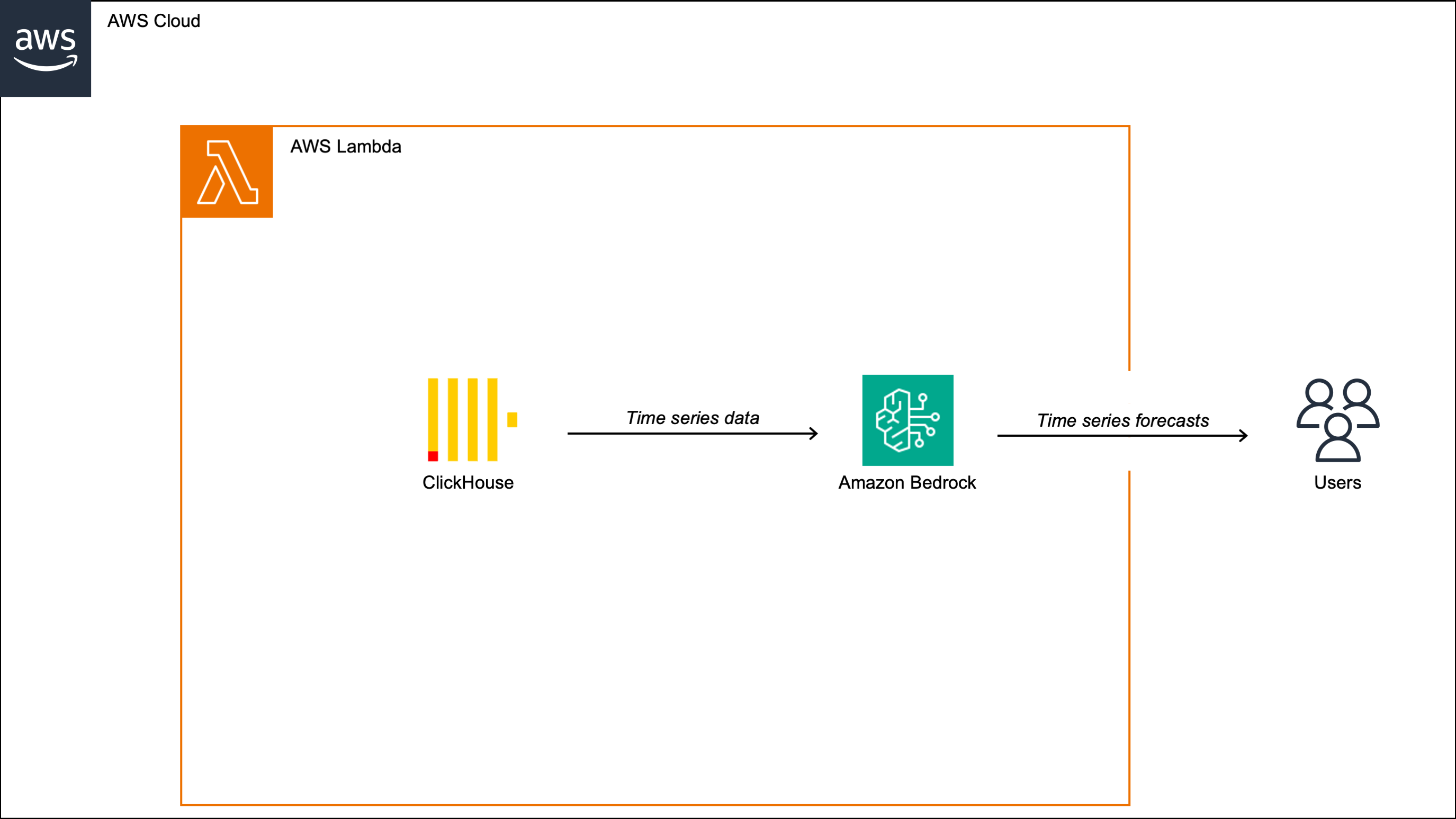

In the rest of this post, we will walk through a practical example of using Chronos-Bolt with time series data stored in ClickHouse. We will deploy Chronos-Bolt to a Bedrock endpoint, then build a Lambda function that invokes the Bedrock endpoint with context data queried from ClickHouse and returns the forecasts.

2. Solution

In this example, we work with the 15-minute time series of the Italian electricity system’s total demand, which we downloaded from Terna’s data portal and stored in a ClickHouse table called total_load_data. However, because we are performing zero-shot forecasting without domain-specific tuning, this solution can be applied to any other time series.

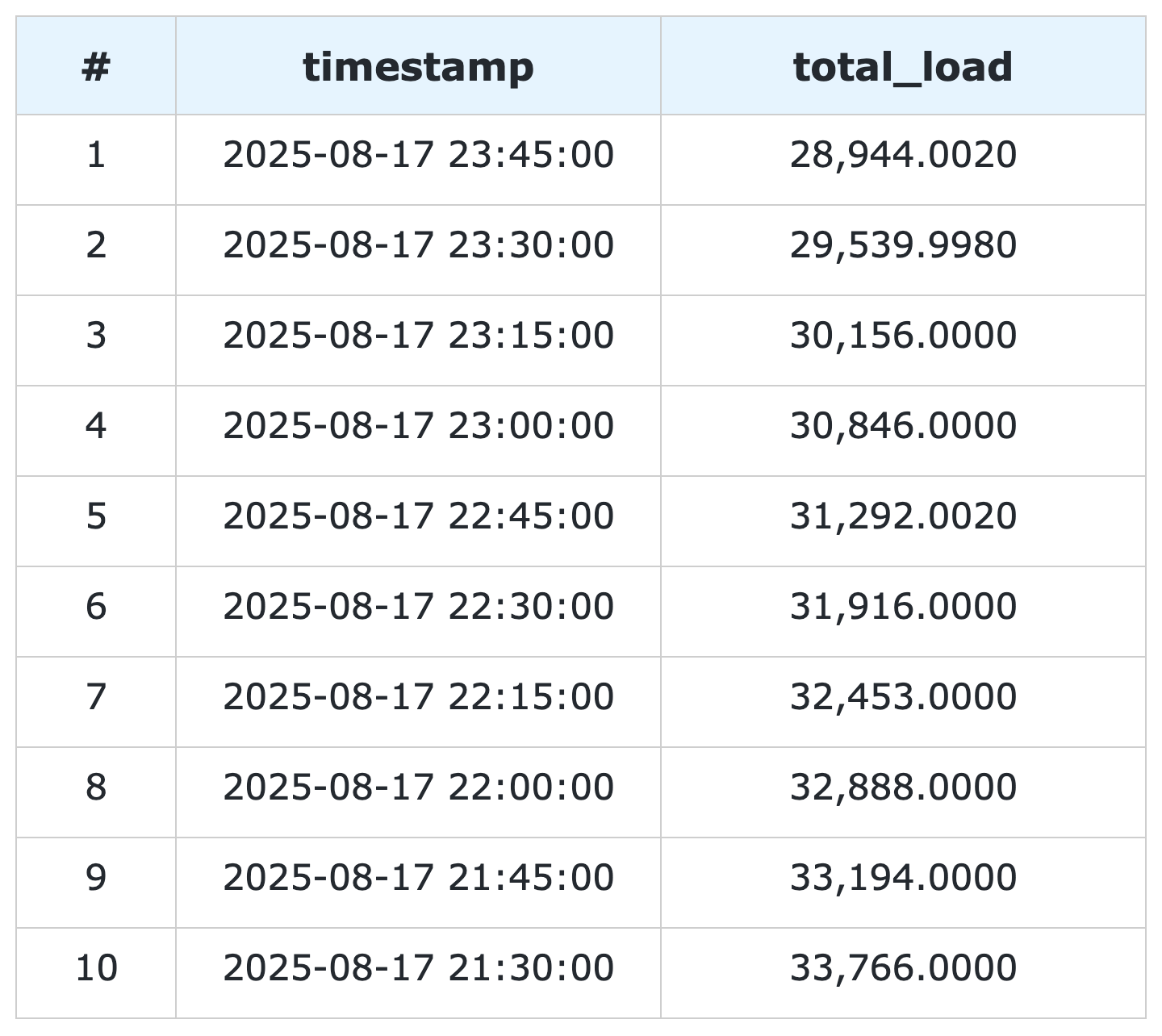

Figure 1: Last 10 rows of total_load_data ClickHouse table.

Note

To run the code provided in the rest of this section, you will need to have Boto3 and the AWS-CLI installed on your machine. You will also need to update several variables in the code to reflect your AWS configuration, such as your AWS account number, region, and service roles.

2.1 Create the Bedrock endpoint

We start by deploying Chronos-Bolt to a Bedrock endpoint hosted on a CPU EC2 instance. This can be done using Boto3 as in the code below, with the AWS-CLI, or directly from the Bedrock console.

import boto3

# Create the Bedrock client
bedrock_client = boto3.client("bedrock")

# Create the Bedrock endpoint
response = bedrock_client.create_marketplace_model_endpoint(
    modelSourceIdentifier="<bedrock-marketplace-arn>",
    endpointConfig={
        "sageMaker": {
            "initialInstanceCount": 1,
            "instanceType": "ml.m5.4xlarge",
            "executionRole": "<bedrock-execution-role>"
        }
    },
    endpointName="<bedrock-endpoint-name>",
    acceptEula=True,
)

# Get the Bedrock endpoint ARN
bedrock_endpoint_arn = response["marketplaceModelEndpoint"]["endpointArn"]
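
Endpoint creation is asynchronous, so the endpoint may not be ready to serve requests immediately after the call returns. The following is a minimal polling sketch, not part of the original code. It assumes that the status is exposed in the "endpointStatus" field of the get_marketplace_model_endpoint response and that "InService" and "Failed" are the terminal values; the helper name wait_for_endpoint and its parameters are hypothetical, so check the field names against your Boto3 version before relying on it.

```python
import time


def wait_for_endpoint(bedrock_client, endpoint_arn, poll_seconds=30, max_attempts=60):
    """Poll a Bedrock Marketplace endpoint until it reports "InService".

    Assumption: the status lives in the "endpointStatus" field and uses
    the values "InService" and "Failed" as terminal states.
    """
    for _ in range(max_attempts):
        response = bedrock_client.get_marketplace_model_endpoint(
            endpointArn=endpoint_arn
        )
        status = response["marketplaceModelEndpoint"].get("endpointStatus")
        if status == "InService":
            return status
        if status == "Failed":
            raise RuntimeError(f"Endpoint creation failed: {endpoint_arn}")
        # Any other status is treated as "still being created"
        time.sleep(poll_seconds)
    raise TimeoutError(f"Endpoint not in service after {max_attempts} attempts")
```

With the client and ARN defined above, this would be called as wait_for_endpoint(bedrock_client, bedrock_endpoint_arn) before the first invocation.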

Important

Remember to delete the endpoint when it is no longer needed to avoid unexpected charges. This can be done using Boto3 as in the code below, with the AWS-CLI, or directly from the Bedrock console.

# Delete the Bedrock endpoint
response = bedrock_client.delete_marketplace_model_endpoint(
    endpointArn=bedrock_endpoint_arn
)

2.2 Create the Lambda function for invoking the Bedrock endpoint with ClickHouse data

We now build a Lambda function for invoking the Bedrock endpoint with time series data stored in ClickHouse.

The Lambda function connects to the ClickHouse database using ClickHouse Connect and loads the context data using the query_df method, which returns the query output in a Pandas DataFrame.

After that, the Lambda function invokes the Bedrock endpoint with the context data.

The Bedrock endpoint response includes the predicted mean and the predicted quantiles of the time series at each future time step, which the Lambda function returns to the user in JSON format together with the corresponding timestamps.
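
To make the shape of the returned JSON concrete, the sketch below attaches timestamps to a forecast dictionary using only the standard library. It is illustrative rather than the actual Lambda code: the helper name add_timestamps is hypothetical, the key names ("mean", "0.1", "0.9") are an assumption about how the endpoint labels its outputs, and the numeric values are made up.

```python
from datetime import datetime, timedelta


def add_timestamps(predictions, initialization_timestamp, frequency):
    """Attach one timestamp per forecast step, starting at the initialization timestamp."""
    start = datetime.strptime(initialization_timestamp, "%Y-%m-%d %H:%M:%S")
    # Assumption: the "mean" key is present and has one value per future time step
    horizon = len(predictions["mean"])
    timestamps = [
        (start + timedelta(minutes=frequency * step)).strftime("%Y-%m-%d %H:%M:%S")
        for step in range(horizon)
    ]
    return {**predictions, "timestamp": timestamps}


# Made-up values, for illustration only (not actual model output)
example = {"mean": [1.0, 2.0, 3.0], "0.1": [0.5, 1.5, 2.5], "0.9": [1.5, 2.5, 3.5]}
result = add_timestamps(example, "2025-08-17 00:00:00", frequency=15)
print(result["timestamp"])
# ['2025-08-17 00:00:00', '2025-08-17 00:15:00', '2025-08-17 00:30:00']
```

The first forecast timestamp coincides with the initialization timestamp, and each subsequent one advances by the series frequency.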

2.2.1 Create the Docker image

To create the Lambda function’s Docker image in Elastic Container Registry (ECR), we need the following files:

- app.py: The Python code of the Lambda function.
- requirements.txt: The list of dependencies that need to be installed in the Docker container.
- Dockerfile: The file containing the instructions to build the Docker image.

2.2.1.1 app.py

The app.py Python script with the entry point of the Lambda function is reported below.

import json

import boto3
import pandas as pd
import clickhouse_connect


def handler(event, context):
    """
    Generate zero-shot forecasts with Chronos-Bolt (Base) Amazon Bedrock endpoint using data stored in ClickHouse.

    Parameters:
    ========================================================================================================
    event: dict.
        A dictionary with the following keys:

        initialization_timestamp: str.
            The initialization timestamp of the forecasts, in ISO format (YYYY-MM-DD HH:mm:ss).

        frequency: int.
            The frequency of the time series, in minutes.

        context_length: int.
            The number of past time steps to use as context.

        prediction_length: int.
            The number of future time steps to predict.

        quantile_levels: list of float.
            The quantiles to be predicted at each future time step.

    context: AWS Lambda context object, see https://docs.aws.amazon.com/lambda/latest/dg/python-context.html.
    """
    # Create the Secrets Manager client
    secrets_manager_client = boto3.client("secretsmanager")

    # Retrieve the ClickHouse credentials from Secrets Manager
    credentials = json.loads(
        secrets_manager_client.get_secret_value(
            SecretId="<clickhouse-secret-name>"
        ).get("SecretString")
    )

    # Create the ClickHouse client
    clickhouse_client = clickhouse_connect.get_client(
        host=credentials["host"],
        user=credentials["user"],
        password=credentials["password"],
        port=credentials["port"],
        secure=True
    )

    # Load the context data from ClickHouse
    df = clickhouse_client.query_df(
        query=f"""
            select
                timestamp,
                total_load
            from
                total_load_data
            where
                timestamp < toDateTime('{event['initialization_timestamp']}')
            and
                timestamp >= toDateTime('{event['initialization_timestamp']}') - INTERVAL {int(event['frequency']) * int(event['context_length'])} MINUTES
            order by
                timestamp asc
        """
    )

    # Create the Bedrock client
    bedrock_runtime_client = boto3.client("bedrock-runtime")

    # Invoke the Bedrock endpoint with the ClickHouse data
    response = bedrock_runtime_client.invoke_model(
        modelId="<bedrock-endpoint-arn>",
        body=json.dumps({
            "inputs": [{
                "target": df["total_load"].values.tolist(),
            }],
            "parameters": {
                "prediction_length": event["prediction_length"],
                "quantile_levels": event["quantile_levels"],
            }
        })
    )

    # Extract the forecasts
    predictions = json.loads(response["body"].read()).get("predictions")[0]

    # Add the timestamps to the forecasts
    predictions["timestamp"] = (
        pd.date_range(
            start=event["initialization_timestamp"],
            periods=event["prediction_length"],
            freq=f"{event['frequency']}min"
        )
        .strftime("%Y-%m-%d %H:%M:%S")
        .tolist()
    )

    # Return the forecasts
    return predictions

The handler function has two arguments:

- event: The input payload with the request parameters.
- context: The runtime information about the invocation.

In this case, the event object is expected to include the following fields:

- "initialization_timestamp": The first timestamp for which the forecasts should be generated.
- "frequency": The frequency of the time series, in minutes.
- "context_length": The number of past time series values to use as context.
- "prediction_length": The number of future time series values to predict.
- "quantile_levels": The quantiles to be predicted at each future time step.

The context object is automatically generated at runtime and does not need to be provided.
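
Because the handler interpolates the event fields directly into the SQL string, it can be worth validating them before the query is built. The sketch below is one possible way to do this with the standard library; the helper name context_window is hypothetical and is not part of the Lambda code above. It also makes explicit which time range the context query covers.

```python
from datetime import datetime, timedelta

TIMESTAMP_FORMAT = "%Y-%m-%d %H:%M:%S"


def context_window(event):
    """Validate the event fields and return the (start, end) of the context window.

    The window is half-open, [start, end), matching the WHERE clause of the query.
    """
    # strptime raises ValueError for anything that is not a well-formed timestamp
    end = datetime.strptime(event["initialization_timestamp"], TIMESTAMP_FORMAT)
    frequency = int(event["frequency"])
    context_length = int(event["context_length"])
    if frequency <= 0 or context_length <= 0:
        raise ValueError("frequency and context_length must be positive")
    start = end - timedelta(minutes=frequency * context_length)
    return start, end


start, end = context_window({
    "initialization_timestamp": "2025-08-17 00:00:00",
    "frequency": 15,
    "context_length": 2016,
})
print(start, end)
# 2025-07-27 00:00:00 2025-08-17 00:00:00
```

With a 15-minute frequency and 2,016 context steps, the window spans 30,240 minutes, that is, exactly 21 days before the initialization timestamp.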

2.2.1.2 requirements.txt

The requirements.txt file with the list of dependencies is as follows:

boto3==1.34.84
clickhouse_connect==0.8.18
pandas==2.3.1

2.2.1.3 Dockerfile

The standard Dockerfile using the Python 3.12 AWS base image for Lambda is also provided for reference:

FROM amazon/aws-lambda-python:3.12

COPY requirements.txt .

RUN pip3 install -r requirements.txt --target "${LAMBDA_TASK_ROOT}"

COPY app.py ${LAMBDA_TASK_ROOT}

CMD ["app.handler"]

2.2.2 Build the Docker image and push it to ECR

When all the files are ready, we can build the Docker image and push it to ECR with the AWS-CLI as shown in the build_and_push.sh script below.

aws_account_id="<aws-account-id>"
region="<ecr-repository-region>"
algorithm_name="<ecr-repository-name>"

aws ecr get-login-password --region $region | docker login --username AWS --password-stdin $aws_account_id.dkr.ecr.$region.amazonaws.com

aws ecr create-repository --repository-name ${algorithm_name}

docker build -t $algorithm_name .

docker tag $algorithm_name:latest $aws_account_id.dkr.ecr.$region.amazonaws.com/$algorithm_name:latest

docker push $aws_account_id.dkr.ecr.$region.amazonaws.com/$algorithm_name:latest
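
The image pushed by this script is identified by the URI used in the docker tag and docker push commands, and the same URI is what the Lambda function needs in place of the <ecr-image-uri> placeholder. As a small convenience sketch, it can be composed in Python as follows; the function name ecr_image_uri and the example account ID, region, and repository name are hypothetical placeholders.

```python
def ecr_image_uri(aws_account_id, region, repository_name, tag="latest"):
    """Compose the ECR image URI following the pattern used by the docker tag command."""
    return f"{aws_account_id}.dkr.ecr.{region}.amazonaws.com/{repository_name}:{tag}"


# Placeholder values, for illustration only
print(ecr_image_uri("123456789012", "eu-west-1", "chronos-lambda"))
# 123456789012.dkr.ecr.eu-west-1.amazonaws.com/chronos-lambda:latest
```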

2.2.3 Create the Lambda function from the Docker image in ECR

After the Docker image has been pushed to ECR, we can create the Lambda function using Boto3 as in the code below, with the AWS-CLI, or directly from the Lambda console.

import boto3

# Create the Lambda client
lambda_client = boto3.client("lambda")

# Create the Lambda function
response = lambda_client.create_function(
    FunctionName="<lambda-function-name>",
    PackageType="Image",
    Code={
        "ImageUri": "<ecr-image-uri>"
    },
    Role="<lambda-execution-role>",
    Timeout=900,
    MemorySize=128,
    Publish=True,
)

2.3 Invoke the Lambda function and generate the forecasts

After the Lambda function has been created, we can invoke it to generate the forecasts. The code below defines a Python function that invokes the Lambda function with the inputs discussed in the previous section and converts the Lambda function’s JSON output to a Pandas DataFrame.

import json

import boto3
import pandas as pd


def invoke_lambda_function(
    initialization_timestamp,
    frequency,
    context_length,
    prediction_length,
    quantile_levels
):
    """
    Invoke the Lambda function that generates zero-shot forecasts with Chronos-Bolt (Base)
    Amazon Bedrock endpoint using data stored in ClickHouse.

    Parameters:
    ========================================================================================================
    initialization_timestamp: str.
        The initialization timestamp of the forecasts, in ISO format (YYYY-MM-DD HH:mm:ss).

    frequency: int.
        The frequency of the time series, in minutes.

    context_length: int.
        The number of past time steps to use as context.

    prediction_length: int.
        The number of future time steps to predict.

    quantile_levels: list of float.
        The quantiles to be predicted at each future time step.
    """
    # Create the Lambda client
    lambda_client = boto3.client("lambda")

    # Invoke the Lambda function
    response = lambda_client.invoke(
        FunctionName="<lambda-function-name>",
        Payload=json.dumps({
            "initialization_timestamp": initialization_timestamp,
            "frequency": frequency,
            "context_length": context_length,
            "prediction_length": prediction_length,
            "quantile_levels": quantile_levels
        })
    )

    # Extract the forecasts in a data frame
    predictions = pd.DataFrame(
        data=json.loads(response["Payload"].read())
    )

    # Return the forecasts
    return predictions
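
Note that a synchronous Lambda invocation returns normally even when the handler raises an exception; in that case the response carries a "FunctionError" key and the payload contains the error details instead of the forecasts. The sketch below shows one way the payload could be decoded defensively before building the DataFrame. The helper name parse_lambda_response is hypothetical, and the error payload layout ("errorMessage") is the one Lambda uses for Python handlers as far as we are aware.

```python
import io
import json


def parse_lambda_response(response):
    """Decode the payload of a Lambda invoke response, raising if the function failed."""
    payload = json.loads(response["Payload"].read())
    # Lambda sets "FunctionError" in the response when the handler raised an exception
    if response.get("FunctionError"):
        message = payload.get("errorMessage") if isinstance(payload, dict) else payload
        raise RuntimeError(f"Lambda function error: {message}")
    return payload


# Simulated response with made-up values, for illustration only
fake_response = {"Payload": io.BytesIO(json.dumps({"mean": [1.0, 2.0]}).encode())}
print(parse_lambda_response(fake_response))
# {'mean': [1.0, 2.0]}
```

Inside invoke_lambda_function, the result of this helper could be passed to pd.DataFrame in place of the raw json.loads call.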

Next, we make two invocations. In the first, we request forecasts over a past time window for which historical data is already available, which allows us to assess how close the forecasts are to the actual data. In the second, we request forecasts over a future time window for which the data is not yet available. In both cases, we use a 3-week context window (24 × 4 × 7 × 3 = 2,016 time steps at 15-minute frequency) to generate 1-day-ahead forecasts (24 × 4 = 96 time steps).

# Define the Lambda function input parameters
frequency = 15
context_length = 24 * 4 * 7 * 3
prediction_length = 24 * 4
quantile_levels = [0.1, 0.5, 0.9]

# Generate the forecasts over a past time window
predictions = invoke_lambda_function(
    initialization_timestamp="2025-08-17 00:00:00",
    frequency=frequency,
    context_length=context_length,
    prediction_length=prediction_length,
    quantile_levels=quantile_levels
)

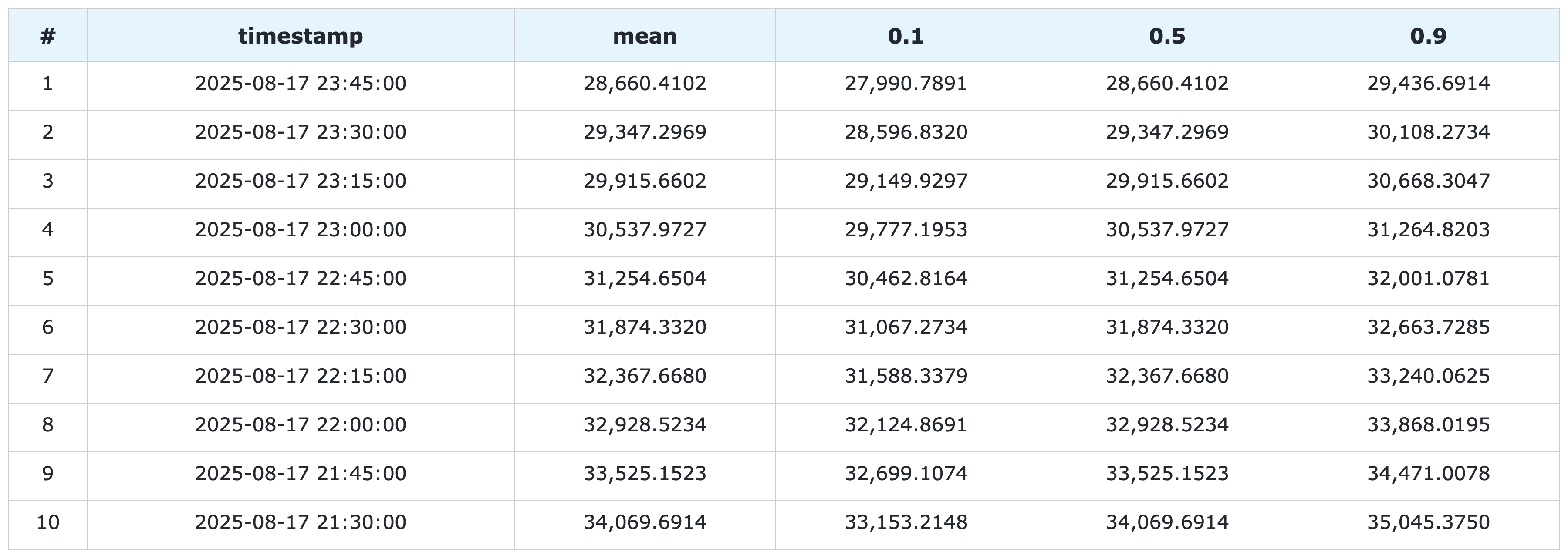

Figure 2: Last 10 rows of predictions DataFrame.

# Generate the forecasts over a future time window
forecasts = invoke_lambda_function(
    initialization_timestamp="2025-08-18 00:00:00",
    frequency=frequency,
    context_length=context_length,
    prediction_length=prediction_length,
    quantile_levels=quantile_levels
)

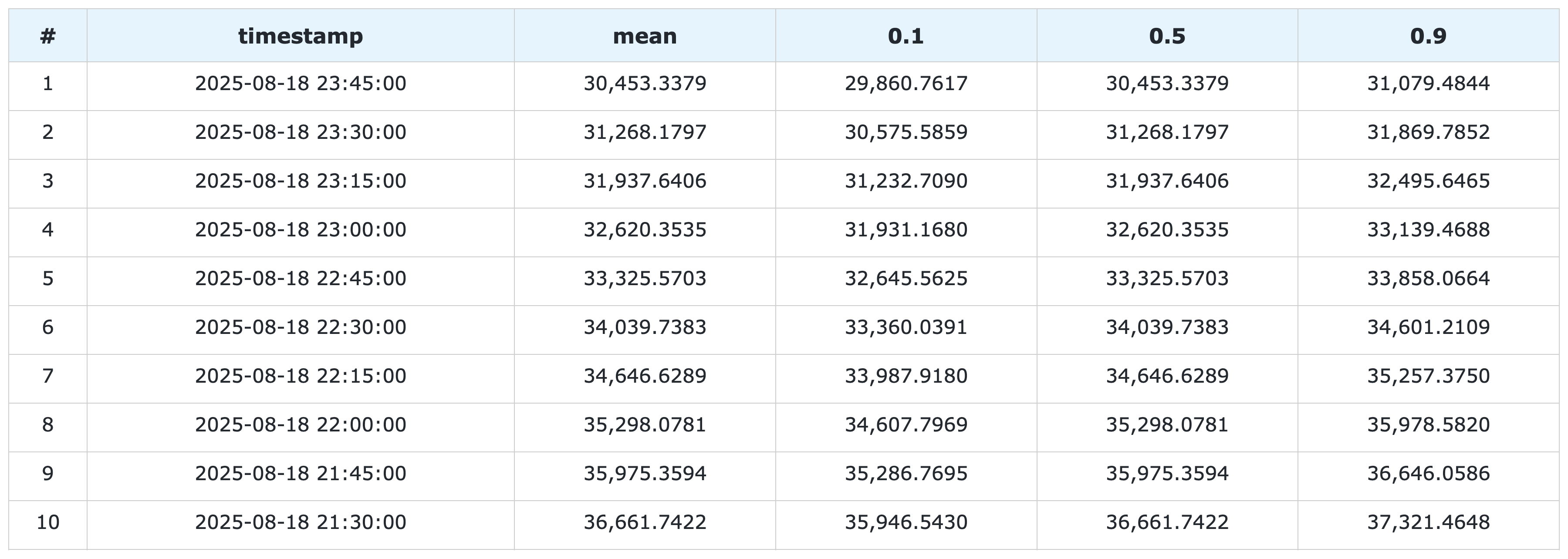

Figure 3: Last 10 rows of forecasts DataFrame.

2.4 Compare the forecasts to the historical data stored in ClickHouse

Now that the forecasts have been generated, we can compare them to the historical data stored in ClickHouse. We again use ClickHouse Connect to query the database and retrieve the results directly into a Pandas DataFrame.

import json

import boto3
import clickhouse_connect
import pandas as pd

# Create the Secrets Manager client
secrets_manager_client = boto3.client("secretsmanager")

# Retrieve the ClickHouse credentials from Secrets Manager
credentials = json.loads(
    secrets_manager_client.get_secret_value(
        SecretId="<clickhouse-secret-name>"
    ).get("SecretString")
)

# Create the ClickHouse client
clickhouse_client = clickhouse_connect.get_client(
    host=credentials["host"],
    user=credentials["user"],
    password=credentials["password"],
    port=credentials["port"],
    secure=True
)

# Load the historical data from ClickHouse
df = clickhouse_client.query_df(
    query="""
        select
            timestamp,
            total_load
        from
            total_load_data
        where
            timestamp >= toDateTime('2025-08-18 23:45:00') - INTERVAL 14 DAYS
        order by
            timestamp asc
    """
)

# Stack the model outputs and parse their string timestamps, so that the join key
# has the same dtype as the ClickHouse column (assumed here to be timezone-naive)
model_outputs = pd.concat([predictions, forecasts], axis=0)
model_outputs["timestamp"] = pd.to_datetime(model_outputs["timestamp"])

# Outer join the historical data with the model outputs
output = pd.merge(
    left=df,
    right=model_outputs,
    on="timestamp",
    how="outer"
)

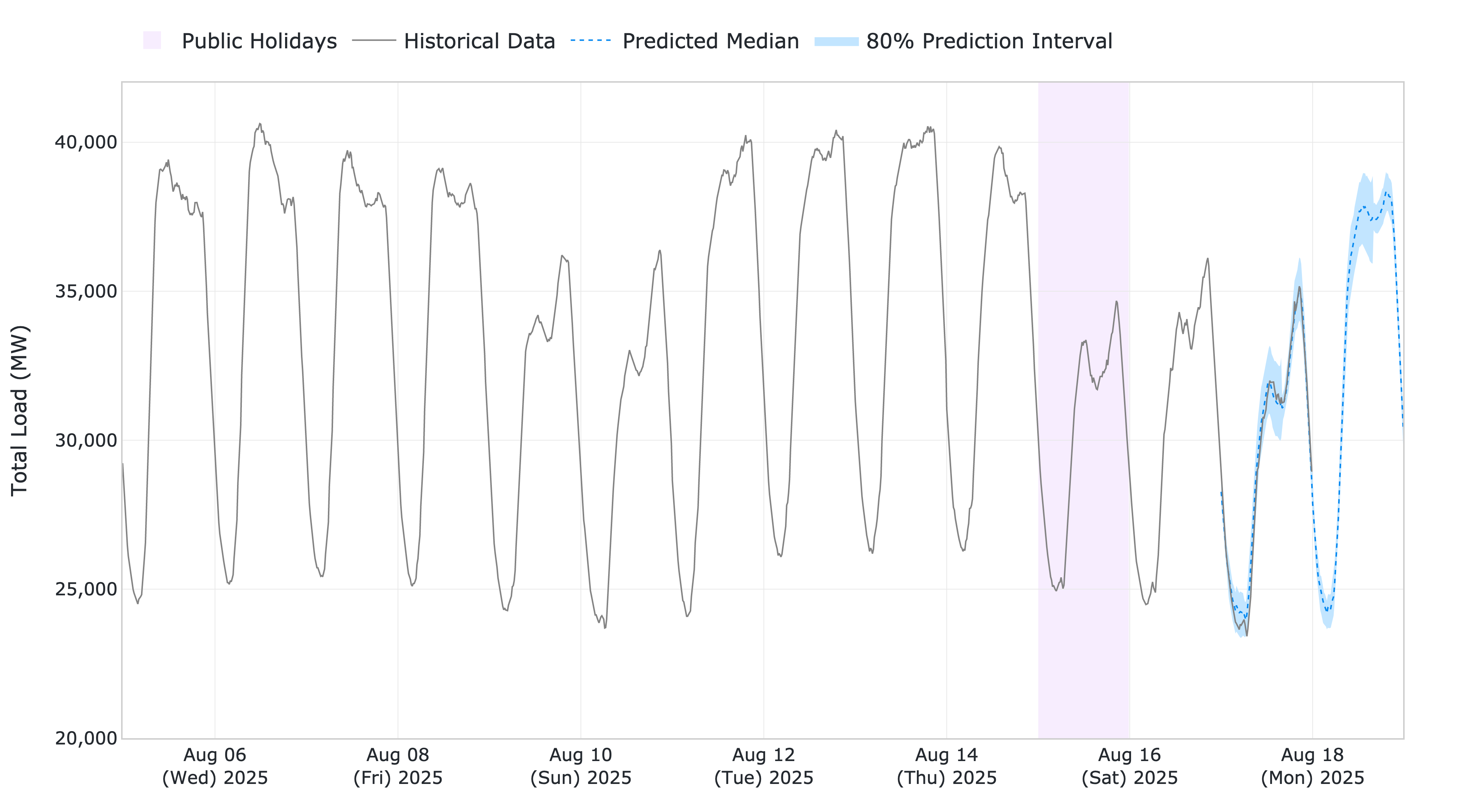

The results show that the forecasts are closely aligned with the actual data, demonstrating the model’s ability to generalize effectively in a zero-shot setting. Despite a holiday occurring on the last Friday of the context window, the model produces accurate forecasts for the subsequent Sunday and correctly anticipates an increase in energy demand on the following Monday, highlighting its strength in capturing complex temporal patterns.

Figure 4: Chronos-Bolt forecasts against historical total load data.
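
The visual comparison can be complemented with simple accuracy metrics over the window where actual data is available. The sketch below computes the mean absolute error of the point forecast and the empirical coverage of the interval between the 0.1 and 0.9 quantiles, which should be close to 80% for a well-calibrated model. The function names are hypothetical and the numbers are made up for illustration; they are not results from the model.

```python
def mean_absolute_error(actual, predicted):
    """Average absolute difference between actual and predicted values."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def interval_coverage(actual, lower, upper):
    """Fraction of actual values that fall inside [lower, upper]."""
    inside = sum(1 for a, lo, hi in zip(actual, lower, upper) if lo <= a <= hi)
    return inside / len(actual)


# Made-up numbers, for illustration only (not actual data or model output)
actual = [30.0, 32.0, 35.0, 33.0]
mean = [29.0, 33.0, 34.0, 36.0]
q10 = [27.0, 30.0, 32.0, 34.0]
q90 = [31.0, 35.0, 37.0, 38.0]

print(mean_absolute_error(actual, mean))    # 1.5
print(interval_coverage(actual, q10, q90))  # 0.75
```

In practice, the inputs would be the total_load column and the corresponding forecast columns of the merged DataFrame, restricted to rows where both are present.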

You can download the full code from our GitHub repository.

References

[1] Ansari, A.F., Stella, L., Turkmen, C., Zhang, X., Mercado, P., Shen, H., Shchur, O., Rangapuram, S.S., Arango, S.P., Kapoor, S. and Zschiegner, J. (2024). Chronos: Learning the language of time series. arXiv preprint, doi: 10.48550/arXiv.2403.07815.

[2] Ansari, A.F., Turkmen, C., Shchur, O., and Stella, L. (2024). Fast and accurate zero-shot forecasting with Chronos-Bolt and AutoGluon. AWS Blogs - Artificial Intelligence.